💡 Why NARU Doesn’t Use Algorithms (And Why That Makes Learning Better)

Greetings, Trailblazers! 🌟

Welcome to the 17th edition of the NARU Newsletter! Today, we're exploring an interesting side of our platform: why we don't use algorithms the way social media platforms do, and how that leads to better outcomes for learning communities.

Every major platform you use runs on algorithms.

Instagram shows you content “curated just for you.” TikTok’s For You page is algorithmically optimised to keep you scrolling. LinkedIn suggests connections based on mysterious calculations. Even learning platforms like Coursera recommend courses through algorithmic predictions of what you might want next.

NARU doesn’t do this. And that’s intentional.

We don’t use algorithms to match accountability partners, recommend content, surface popular posts, or decide what you see. This isn’t because we lack the technical capability; it’s because algorithms fundamentally undermine the kind of learning that actually drives transformation.

This decision makes us different from nearly every platform in existence. It’s counter-cultural in a world that worships optimisation and personalisation. But after watching what algorithms have done to Gen Z’s mental health, attention spans, and ability to form genuine relationships, we believe learning communities need a fundamentally different approach.

This article explains why NARU deliberately avoids algorithms, what we do instead, and why human-centred design beats machine optimisation when the goal is real transformation, not just engagement metrics.

The Algorithm Problem: Designed for Addiction, Not Learning

What Algorithms Actually Optimise For

When platforms say their algorithm “personalises your experience,” here’s what they really mean:

Algorithms are designed to maximise engagement.

That’s it. Not your learning. Not your growth. Not your wellbeing. Just time on platform, clicks, and interactions, because those metrics drive advertising revenue.

Research from Emory University found that algorithms are “developed to hold our attention and drive ad revenue”, and when what holds attention exacerbates loneliness, depression, or anxiety, these negative feelings “get ramped up by increased engagement with these platforms.”

The Feedback Loop:

Algorithm shows content that triggers emotion (outrage, envy, FOMO, curiosity)

You engage (click, comment, share, scroll)

Algorithm learns what keeps you hooked

Serves more of that content

Repeat until you’re addicted

Studies show approximately 24.4% of adolescents meet criteria for social media addiction, with compulsive engagement associated with increased anxiety, depression, and attention disorders. This isn’t accidental; it’s the system working exactly as designed.

The Mental Health Cost

The impact on Gen Z is particularly severe:

Anxiety Epidemic: Between 2010 and 2012, when algorithmic social media became central to teen life, loneliness, depressive symptoms, and diagnosed anxiety began to spike. By 2021, almost one-third of high school students had experienced poor mental health within the past month.

Comparison Culture: Algorithms amplify idealised aesthetics and perfection. Gen Z sees AI-generated images of flawless bodies, impossibly curated lives, and achievements that feel unattainable. Mental health professionals state that constant exposure to these algorithmically surfaced standards undermines self-esteem.

Fragmented Attention: Algorithms fragment attention and prevent the sustained, embodied awareness needed for genuine emotional processing, training brains toward reactivity rather than reflection.

Echo Chambers: TikTok’s algorithm feeds users content aligned with their interests and anxieties. A teen who watches one anxiety video is soon inundated with clips about panic disorders or PTSD, creating cycles of hyper-awareness and self-doubt.

McKinsey research found negative effects are greatest for Gen Zers who spend more than two hours daily on social media, and yet they’re the generation spending the most time there, caught in the algorithmic trap.

Why Algorithms Fail for Learning Communities

Beyond general mental health harms, algorithms specifically undermine what makes learning communities effective:

1. Algorithms Reward Volume Over Value

What Gets Amplified:

Posts with high engagement (likes, comments, shares)

Content that triggers emotional reactions

Frequent posters who game the system

Viral moments and hot takes

What Gets Buried:

Thoughtful reflections that took time to write

Vulnerable sharing about struggles

Patient progress updates without drama

Deep learning that doesn’t go viral

In algorithmic systems, students learn to optimise for engagement rather than learning. They post what gets likes, not what represents genuine progress. This transforms learning communities into performance spaces where authenticity takes a back seat to virality.

2. Algorithms Create Comparison Anxiety

When algorithms surface “top contributors” or “most popular posts,” they create hierarchies that trigger comparison:

“Why does their progress post have 50 likes and mine has 3?”

“Am I falling behind because the algorithm doesn’t show my work?”

“Maybe I shouldn’t share my struggles; nobody wants to see that.”

Exposure to highly curated or emotionally charged content can contribute to increased anxiety, depression, body image concerns, and social comparison. For Gen Z learners already struggling with mental health, algorithmic platforms add another layer of pressure.

Learning requires vulnerability: admitting what you don’t know, sharing mistakes, asking “dumb” questions. Algorithms punish vulnerability by burying it in favour of polished success stories. This is the opposite of what learning communities need.

3. Algorithms Fragment Community Cohesion

In cohort-based learning, everyone should move through the journey together. But algorithms personalise feeds, meaning:

Students see different content based on their engagement patterns

Shared experiences become individualised feeds

Cohort unity fractures into personalised silos

“What did you think of X?” becomes confusing because not everyone saw X

The power of cohorts comes from synchronised progression and shared struggle. Algorithms destroy this by fragmenting the collective experience into millions of personalised micro-experiences.

4. Algorithms Optimise for Addictive Behaviour

The unpredictable nature of algorithmic rewards encourages nomophobia (fear of being without your mobile phone or signal) and FOMO (fear of missing out), along with frequent check-ins and increased use. This dopamine-driven cycle is antithetical to deep learning, which requires:

Sustained focus without interruption

Time for reflection and integration

Patience with difficult concepts

Presence rather than constant checking

When students are conditioned to check their feed every few minutes for algorithmic dopamine hits, they can’t sustain the deep work that actual learning demands. Teens spend 3–6 hours daily scrolling, often at the cost of sleep, physical activity, and academic performance.

5. Algorithms Undermine Genuine Relationships

Algorithmic platforms create parasocial relationships: you feel connected to people you’ve never actually interacted with because the algorithm shows you their content. But these aren’t real relationships with reciprocal support.

In learning communities, transformation comes from genuine peer accountability: people who know your name, remember your goals, notice when you’re struggling, and care about your success. Algorithms create illusions of community while enabling actual isolation.

What NARU Does Instead: Human-Centred Design

If algorithms don’t drive NARU, what does? Intentional structure designed by humans, for humans, based on behavioural science.

1. You Choose Your Allies (Not an Algorithm)

The Algorithmic Approach: “Our AI matches you with the perfect accountability partner based on 47 data points!”

The NARU Approach: You choose 1–3 specific people from your cohort to be your Allies for particular goals or projects.

Why This Works Better:

Autonomy: Research shows chosen relationships outperform assigned ones. When you select your Allies, you’re investing in that partnership from day one.

Context: You know who in your cohort shares your learning style, timezone, or specific goals. No algorithm can capture that nuance from data points.

Commitment: Choosing someone creates a mutual obligation. They didn’t get algorithmically assigned to you; you specifically asked them to support you. That matters psychologically.

Flexibility: As goals evolve, you can choose different Allies. No waiting for the algorithm to “re-optimise” your matches.

2. Clubs Are Human-Organised, Not Algorithmically Sorted

The Algorithmic Approach: “Based on your interests and engagement patterns, we’ve placed you in Community A with these 12 users!”

The NARU Approach: Course creators design Club structures with strategist support. Members are intentionally placed in micro-communities of 5–15 people based on cohort design, not algorithmic sorting.

Why This Works Better:

Educator Expertise: The course creator or community strategist understands the learning journey and can create Clubs that support it. No algorithm has that understanding.

Cohort Cohesion: Clubs can be organised by cohort start date (everyone starting together), by specialisation, or by timezone. These are pedagogical decisions, not optimisation problems.

Stability: Your Club doesn’t change based on your engagement patterns. You belong to a specific community where relationships can deepen over time.

Accountability Through Belonging: When you’re in a Club of 8 people, everyone knows everyone. Your absence is noticed. This creates natural accountability that algorithms can’t replicate.

3. Content Visibility is Chronological, Not Algorithmic

The Algorithmic Approach: “We show you posts we predict you’ll engage with most, based on your behaviour!”

The NARU Approach: You see content in chronological order. Recent posts from your cohort, Club, and Allies appear based on when they were posted, not algorithmic predictions of what will keep you scrolling.

Why This Works Better:

Fairness: Everyone’s progress updates, questions, and achievements get equal visibility. No algorithmic favouritism creating winners and losers.

Authenticity: Students post genuine updates because they know the entire cohort will see them, not just whoever the algorithm decides.

Cohort Unity: Chronological feeds create a shared timeline. “Did you see Sarah’s breakthrough yesterday?” works because everyone saw the same content at the same time.

Reduced FOMO: When you check in, you see what happened since your last visit, not an endless, algorithmically optimised feed designed to keep you scrolling forever.
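For the technically curious, here is a minimal Python sketch of the difference between a chronological feed and an engagement-ranked one. It is an illustration only, not NARU's actual code: the `Post` class, its field names, and the three functions are all hypothetical names made up for this example.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Post:
    # Hypothetical fields, chosen only for this illustration.
    author: str
    posted_at: datetime
    likes: int


def chronological_feed(posts):
    """Newest first. Likes play no part in the ordering."""
    return sorted(posts, key=lambda p: p.posted_at, reverse=True)


def engagement_ranked_feed(posts):
    """Contrast case: the most-liked post wins, whenever it was written."""
    return sorted(posts, key=lambda p: p.likes, reverse=True)


def posts_since(posts, last_visit):
    """Everything posted after the last visit, newest first."""
    return chronological_feed([p for p in posts if p.posted_at > last_visit])


posts = [
    Post("Sarah", datetime(2025, 3, 1, 9, 0), likes=3),
    Post("Ben", datetime(2025, 3, 2, 18, 30), likes=50),
    Post("Aiko", datetime(2025, 3, 3, 8, 15), likes=0),
]

print([p.author for p in chronological_feed(posts)])      # ['Aiko', 'Ben', 'Sarah']
print([p.author for p in engagement_ranked_feed(posts)])  # ['Ben', 'Sarah', 'Aiko']
```

In the chronological version, Aiko's post with zero likes sits at the top simply because it is the most recent. In the engagement-ranked version it drops to the bottom, which is the burying effect described earlier.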

4. Streaks Track Consistency, Not Engagement

The Algorithmic Approach: “You’re in the top 10% of users! Keep scrolling to maintain your ranking!”

The NARU Approach: Streaks track your consistency in checking in and posting progress updates. A 72-hour grace period embodies James Clear’s “never miss twice” principle from Atomic Habits.

Why This Works Better:

Behaviour Change: Streaks reinforce the habit you’re trying to build (consistent progress on your goals), not the habit of compulsive platform checking.

Grace Period: Life happens. The 72-hour window acknowledges this without the toxic pressure of “perfect daily streaks” that cause anxiety and burnout.

Personal Metric: Your Streak is about YOUR consistency, not comparative ranking against others. This removes competitive pressure while maintaining accountability.

Learning-Focused: The system tracks whether you’re doing the work (posting progress, checking in with Allies), not whether you’re generating engagement metrics for the platform.
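To make the grace period concrete, here is a small Python sketch of how a streak with a 72-hour window could be counted. Only the 72-hour figure comes from the description above; everything else is an assumption for illustration, including the `current_streak` name, the idea that a streak is the number of check-ins in the latest unbroken run, and the rule that it resets to zero once more than 72 hours have passed since the last check-in. NARU's real rules may differ.

```python
from datetime import datetime, timedelta

GRACE = timedelta(hours=72)  # the grace period described above


def current_streak(check_ins, now):
    """Count check-ins in the latest run whose gaps never exceed GRACE.

    Assumptions (not confirmed by NARU): the streak is a count of
    check-ins, and it resets to 0 once `now` is more than GRACE past
    the most recent check-in.
    """
    if not check_ins:
        return 0
    times = sorted(check_ins)
    if now - times[-1] > GRACE:
        return 0
    streak = 1
    # Walk backwards through consecutive pairs, newest pair first.
    for earlier, later in zip(reversed(times[:-1]), reversed(times[1:])):
        if later - earlier > GRACE:
            break
        streak += 1
    return streak


day = datetime(2025, 1, 1, 9, 0)
check_ins = [
    day,
    day + timedelta(days=1),
    day + timedelta(days=5),  # 4-day gap before this one breaks the earlier run
    day + timedelta(days=7),
    day + timedelta(days=9),
]

print(current_streak(check_ins, now=day + timedelta(days=10)))  # 3
```

Missing a day or two costs nothing here: the two-day gaps between the last three check-ins all fall inside the window, so the streak stands at 3. Only the four-day silence earlier on resets the count.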

5. Milestones Create Collective Goals, Not Individual Competition

The Algorithmic Approach: “You’re rank #47 out of 200! Climb the leaderboard!”

The NARU Approach: Milestones are shared cohort deadlines where everyone works toward the same goal together. Success is collective, not competitive.

Why This Works Better:

Cooperation Over Competition: Instead of competing for rank, cohorts support each other toward shared achievement. This transforms pressure into support.

Synchronised Progress: Everyone reaches milestones together, maintaining the cohort model’s power of synchronised learning.

Reduces Anxiety: No public rankings. No visibly falling behind others. Just clear shared goals that bring the cohort together.

Real Achievement: Milestones measure actual completion (finishing a module, delivering a project), not engagement metrics (most posts, most likes).

The Results: Why Human-Centred Beats Algorithm-Driven

Mental Health: Without algorithms creating comparison anxiety, FOMO, or compulsive checking, NARU supports the psychological safety Gen Z needs for vulnerable learning.

Genuine Relationships: When you choose your Allies and belong to a stable Club, you form real peer relationships, not algorithmic parasocial connections.

Completion Rates: Cohort-based programs on NARU see 60–80% completion rates because accountability comes from real human relationships, not gamified algorithmic incentives.

Focus: Chronological feeds reduce compulsive checking. Students engage intentionally when they have updates or questions, not because the algorithm is pulling them back in.

Authenticity: Without algorithmic favouritism, students share genuine progress, struggles and wins alike, creating the authentic community that supports transformation.

The Broader Philosophy: Technology Should Serve Humans

The decision not to use algorithms reflects a larger philosophy about technology’s role in education:

Most platforms ask: “How can we use AI/algorithms to optimise engagement and growth?”

NARU asks: “What do humans actually need to learn and transform together?”

These questions lead to fundamentally different designs.

Algorithm-Driven Platforms:

Maximise time on the platform

Personalise everything

Create addictive feedback loops

Optimise for metrics

Scale infinitely

Treat humans as data points

Human-Centred Platforms:

Support genuine relationships

Create stable structures

Build sustainable habits

Optimise for outcomes

Scale thoughtfully

Treat humans as people

We believe learning communities aren’t optimisation problems to be solved through machine learning. They’re human ecosystems requiring careful design around how people actually form relationships, maintain motivation, and transform through peer support.

What This Means for Gen Z Learners

Gen Z has grown up in algorithmically optimised environments. Nearly 3 in 5 Gen Zers spend at least one to two hours daily on social media, and 35% spend over two hours, more than any other generation. They’ve experienced firsthand how algorithms:

Create comparison anxiety

Fragment attention

Undermine authenticity

Fuel mental health challenges

Optimise for addiction over wellbeing

NARU represents a different approach, one that acknowledges Gen Z’s digital fluency while rejecting the algorithmic manipulation that’s harmed their generation.

For Gen Z, NARU offers:

Privacy: Invitation-only communities not discoverable by algorithms

Authenticity: No performing for algorithmic favour

Control: You choose your Allies, not a machine

Safety: Small Clubs where you’re known, not lost in algorithmic feeds

Presence: Chronological content supporting focus, not compulsive checking

This design acknowledges that Gen Z wants technology that enhances life, not technology that consumes it.

The Uncomfortable Truth About “Personalisation”

Platforms justify algorithms by claiming “personalisation improves user experience.” But whose experience?

Research shows algorithms are built to boost engagement, not personal wellbeing. The “personalised” feed isn’t customised for your growth; it’s optimised to keep you scrolling so the platform can sell more ads.

Real personalisation in learning communities looks different:

Choosing your own accountability partners based on actual compatibility

Belonging to a Club where people know your specific goals and challenges

Progressing through content at your cohort’s shared pace, not an algorithm’s prediction

Getting support from humans who remember your context, not automated recommendations

This is personalisation that serves you, not the platform’s business model.

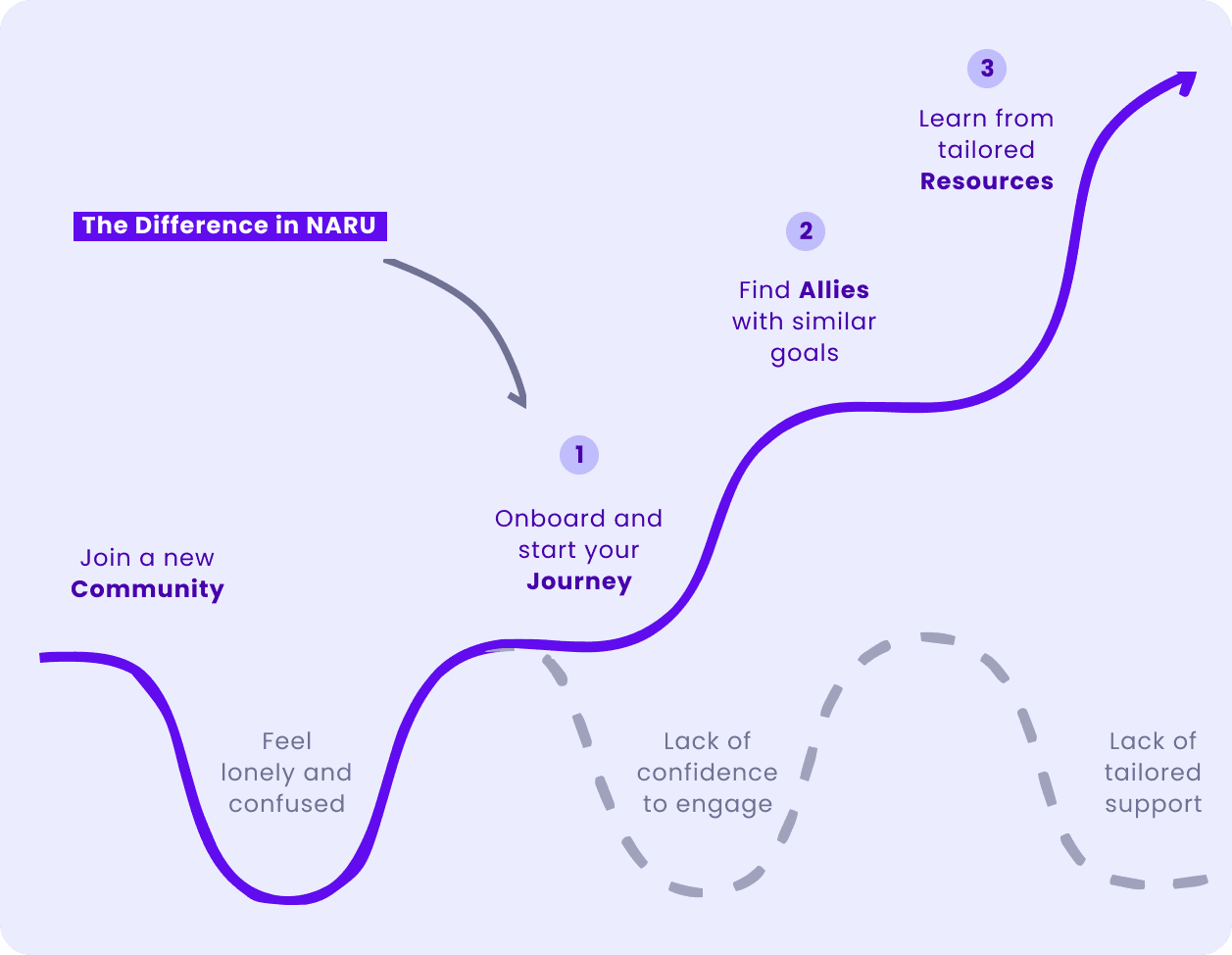

NARU Onboarding experience (NARU)

The Bottom Line: Algorithms Create Engagement. We Create Transformation.

NARU doesn’t use algorithms because our goal isn’t maximising your time on our platform; it’s maximising your likelihood of actually finishing what you start and achieving your learning goals.

We measure success differently:

Not by daily active users → by course completion rates

Not by time on platform → by skills actually developed

Not by engagement metrics → by real-world transformation

Not by algorithmic optimisation → by human relationships

This makes us weird in the tech world. Investors and growth-hackers would tell us we’re leaving money on the table by not optimising for engagement. They’re right. We’re deliberately choosing a different path.

Because here’s what we’ve learned: Algorithms are exceptional at creating engagement. They’re terrible at creating transformation. And if you’re building a learning community, you have to decide which you’re optimising for.

We choose transformation. Every time.

Ready for Learning Communities Without Algorithmic Manipulation?

NARU is built for course creators and learners who believe:

Real relationships beat algorithmic recommendations

Chronological feeds support focus better than engineered addictiveness

Chosen accountability partners outperform AI matching

Collective progress matters more than individual rankings

Mental health shouldn’t be sacrificed for engagement metrics

For Gen Z learners tired of algorithm-driven anxiety and comparison culture, NARU offers privacy-protected spaces where genuine peer relationships, not machine optimisation, drive growth.

For course creators who measure success by completion rates and student transformation rather than engagement metrics, NARU provides the human-centred accountability structures that actually work.

Visit naru.com.au to build learning communities where technology serves humans, not the other way around.

Stay healthy & gold,

Michelle EW

Co-founder & Product @ NARU